Blog Author: Yaqi Liu

Generative AI has become widely used among young people, and the rise of sexualised deepfake abuse has revealed serious issues. This week, we were delighted to invite Ruby Sciberras, Prof. Tanya Horeck, and Prof. Jessica Ringrose to the UCL FEEL to discuss the harms experienced by young people and the actions that can be taken in an increasingly complex AI-driven digital environment.

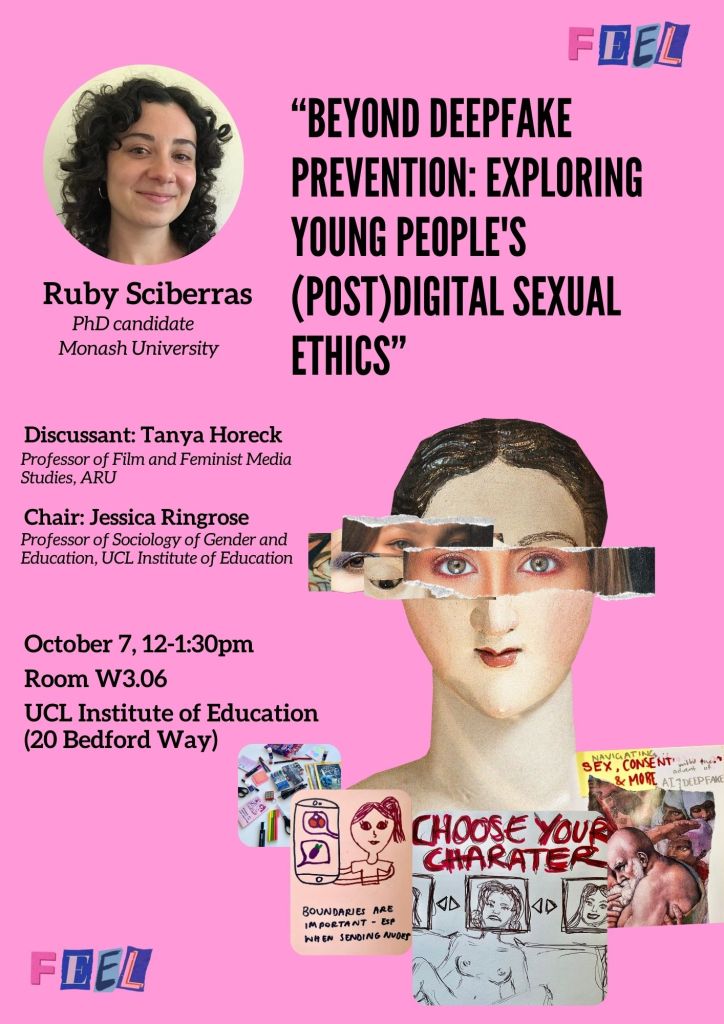

PhD Candidate: Ruby Sciberras

Beyond deepfake prevention: exploring young people’s digital sexual ethics

Ruby Sciberras opened her presentation by citing news reports from ABC News Australia, which reported that some schools and police had received reports of female students being targeted with sexualised deepfake images. Several of the students involved had been suspended, and the incidents sparked intense debate among the public and various stakeholders (ABC News, 2024; ABC News, 2025).

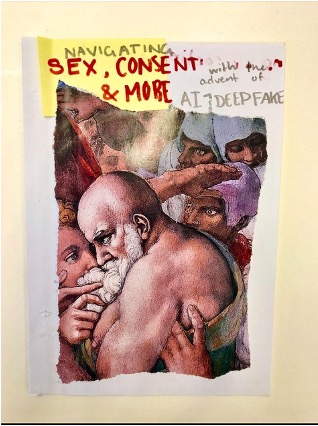

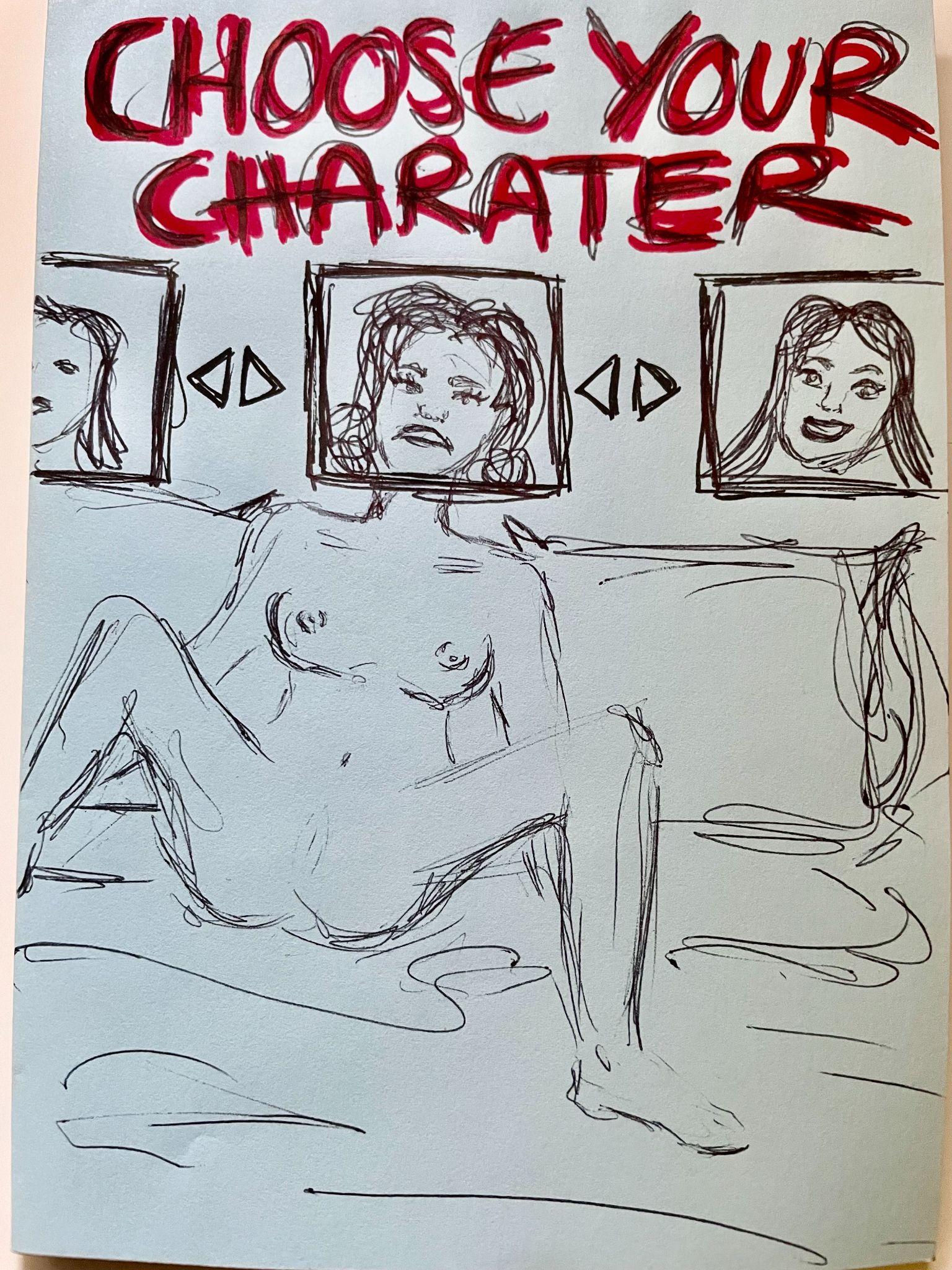

Based on these pressing issues, Ruby conducted her research using arts-based focus groups with young people aged 16 to 22 in Melbourne, Australia. Participants were asked about their negative perceptions of sexualised deepfakes and the positive potential of digital spaces to portray agentic feminine sexuality.

Ruby then presented her initial findings, which centre on two primary questions: how young people feel about and resist the emergence and spread of deepfakes, and how they draw on existing digital practices (such as sexting) to develop a renewed “digital sexual ethics” that could be applied to deepfakes.

Specifically, participants expressed concern and fear toward deepfakes, recognizing the importance of taking them seriously while also engaging in critical thinking. They had internalized risk- and fear-based educational messages, which often reinforce sexual stigma, taboo, and shame, thereby exacerbating image-based sexual abuse (IBSA). In discussions of ethical digital sexual interactions, participants emphasized the importance of specific consent, respect for boundaries, and safety.

Professor Tanya Horeck and Professor Jessica Ringrose

Strengthening Prevention Action Against Non-Consensual Intimate Image Abuse (NCII) and Deepfakes: Supporting Teens and Educators

After Ruby, Prof. Horeck presented her research with Prof. Ringrose on young people’s experiences of technology-facilitated gender-based violence during COVID-19. The findings revealed that 48% of teenagers had experienced at least one form of technology-facilitated sexual violence, and 27% had experienced at least one type of image-based sexual harassment and abuse (IBSHA). Among those affected by IBSHA, 100% of participants reported that such experiences increased during COVID-19.

Building on these findings, Prof. Horeck highlighted that the growth of deepfake sexual abuse in schools requires urgent attention. The Internet Matters Report (2024) found that only 11% of children aged 13-17 have been taught about deepfakes in school. It also found that teenagers see nude deepfake abuse as worse than sexual abuse featuring real images, as it involves a loss of autonomy and awareness, anonymous perpetrators, manipulated appearances, and fears that others might believe the image is real.

In response to these concerns, Prof. Horeck shared her work with focus groups involving educational leaders and a youth advisory board. This work explores how to collaboratively foster a safer and more ethically conscious digital environment, taking into account both school policy perspectives and young people’s lived experiences.

Taken together, these talks highlight the significant challenges brought by “AI+”, especially as this rapidly evolving technology becomes increasingly accessible and makes deepfakes easy to produce, while effective multi-stakeholder regulation is still lacking. Now more than ever, it is imperative to establish educational standards that equip young people with essential digital literacy and a strong sense of digital sexual ethics.

References: